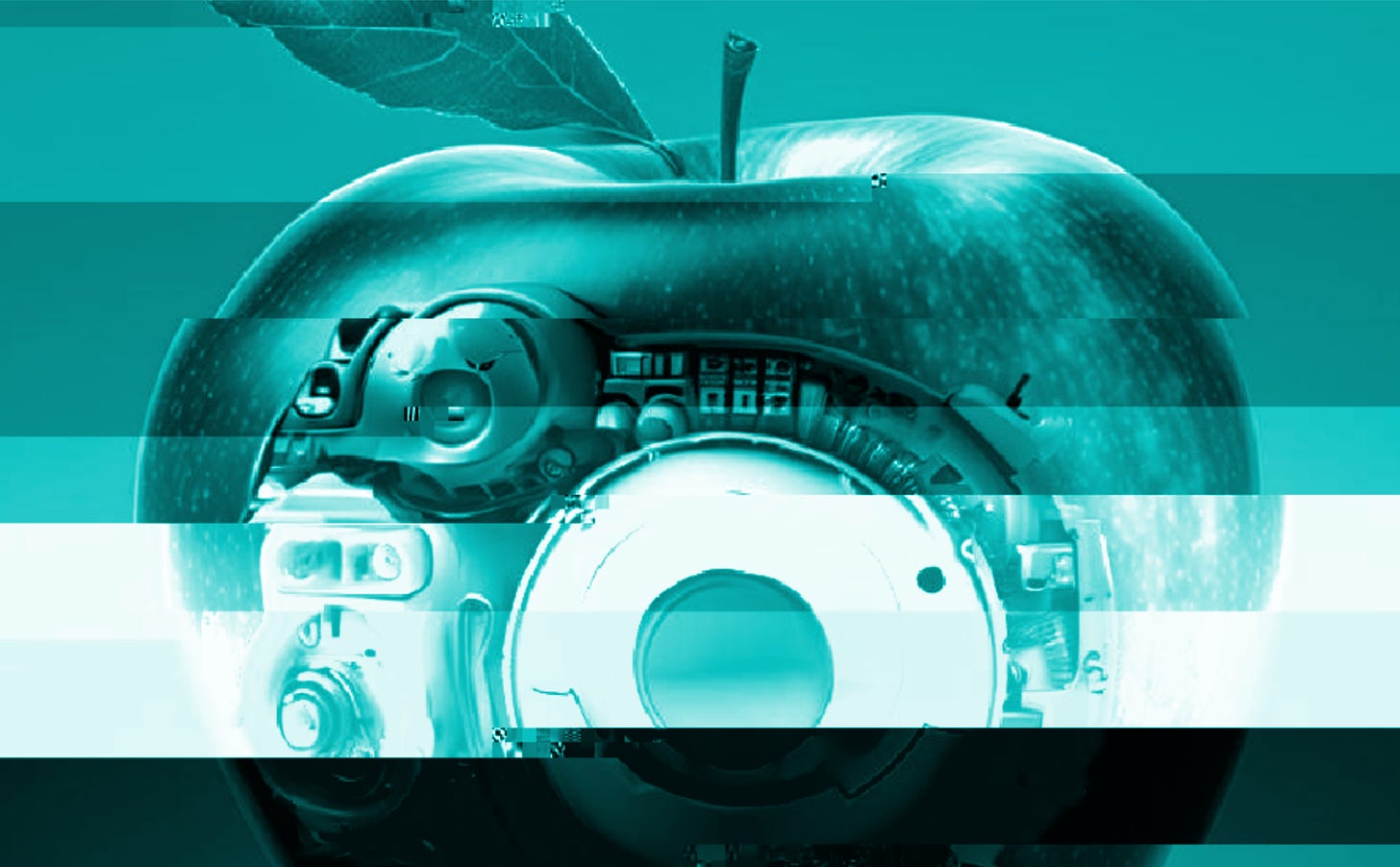

How Apple Intelligence exposes global fault lines over the future of AI.

Inferences from Minerva Technology Policy Advisors. Vol. 18 - 18 June 2024

Last week, Apple previewed a set of new AI-powered products at its annual developers’ conference. Nearly two years after the launch of ChatGPT took the world by storm, it was an opportunity for one of the world’s most powerful companies to show how it planned to harness this revolutionary and geopolitically disruptive technology to *drumroll please*… do routine stuff a bit faster.

Most headlines about the announcement focused on Apple’s plans to integrate its voice assistant Siri with OpenAI’s ChatGPT, similar to the way other companies, including Microsoft, have emphasized their efforts to incorporate large language models into products like software copilots.

The bigger story is that many of the new features that Apple unveiled will be powered by smaller models, aimed at narrow tasks and capable of running natively on an iPhone.

At a time when breathless commentators are speculating that massive generative models will render Hollywood obsolete and drive thousands of corporate roles to extinction, one of the most innovative companies on Earth is more focused on using AI to help sort out your calendar, touch up a photo, or make a new emoji. What’s going on?

Inference: Apple is betting that getting innovation with a small i right is more important for the future of its business than Innovation with a capital I. It’s a different way of looking at artificial intelligence that also helps expose the global fault lines in AI governance.

The big-I view holds that the rise of generative artificial intelligence is an unprecedented technological leap that will be a game-changer for business and geopolitics. Ever-bigger models, trained on massive amounts of data and capable of performing many different tasks at an expert level, will disrupt entire industries and may even pose existential risks for humanity. Winning in big-I innovation requires major investments in the compute, data, and energy resources needed to run the most powerful and cutting-edge models. The enormous risks and opportunities of frontier AI likewise demand a big policy response, including sweeping new rules for AI and government strategies for promoting national champions.

Viewed through a small-i innovation lens, the AI revolution looks very different. Foundation models are important new tools, but they also have serious limitations and aren’t always fit for the job. The real value of AI will come not from the pursuit of ever-bigger models, but from experimenting with how to apply many different varieties of AI to solve well-defined practical problems. The AI model itself may be just one component of a much larger technology system (in Apple’s case, the iPhone and its related software suite). Mastering small-i innovation requires deep sector and domain expertise. It also calls for more flexible regulatory strategies that encourage experimentation and account for the specific context in which a technology is being used.

These different ways of looking at AI innovation don’t just explain what is different about “Apple Intelligence”; they also illuminate why it’s so hard to get countries to align on governance.

Criticisms that the US is not moving fast enough on AI regulation, such as a recent essay in Foreign Policy, arguably take a big-I innovation view: AI is a big deal and there is an urgent need for firm guardrails now. A small-i innovation view would suggest that it’s too early in the AI revolution for sweeping new rules, and that regulating specific uses of AI under existing laws is more likely to strike the right balance between opportunity and risk.

Of course, big-I innovation and small-i innovation aren’t mutually exclusive; they exist side-by-side. Up to now, big-I innovation has arguably dominated the agenda in company boardrooms and in global capitals. Apple’s embrace of a more small-i approach to AI innovation is an indication that the pendulum may be swinging back.

What we’re reading:

Information about the newly launched round by the NATO Innovation Fund, backing AI, robotics and space tech in Europe.

Reporting on whether Los Angeles schools will become the first to impose a city-wide ban on smartphones for students.

A conversation with White House Office of Science and Technology Policy Director Arati Prabhakar, for a dose of optimism on the potential of AI to achieve vital developments in energy and healthcare.

What we’re looking ahead to:

15 - 18 July: IEEE International Conference on Artificial Intelligence Testing in Shanghai, China.

22 July: Priorities for AI policy and regulation in the UK Forum.

22 - 23 September: UN Summit of the Future.

10 - 11 February 2025: AI Action Summit in Paris, France.